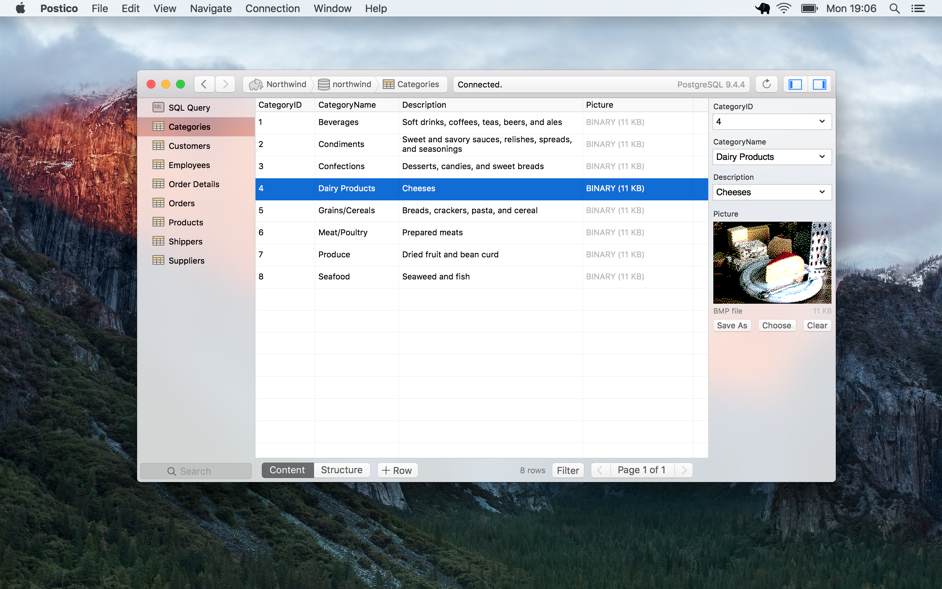

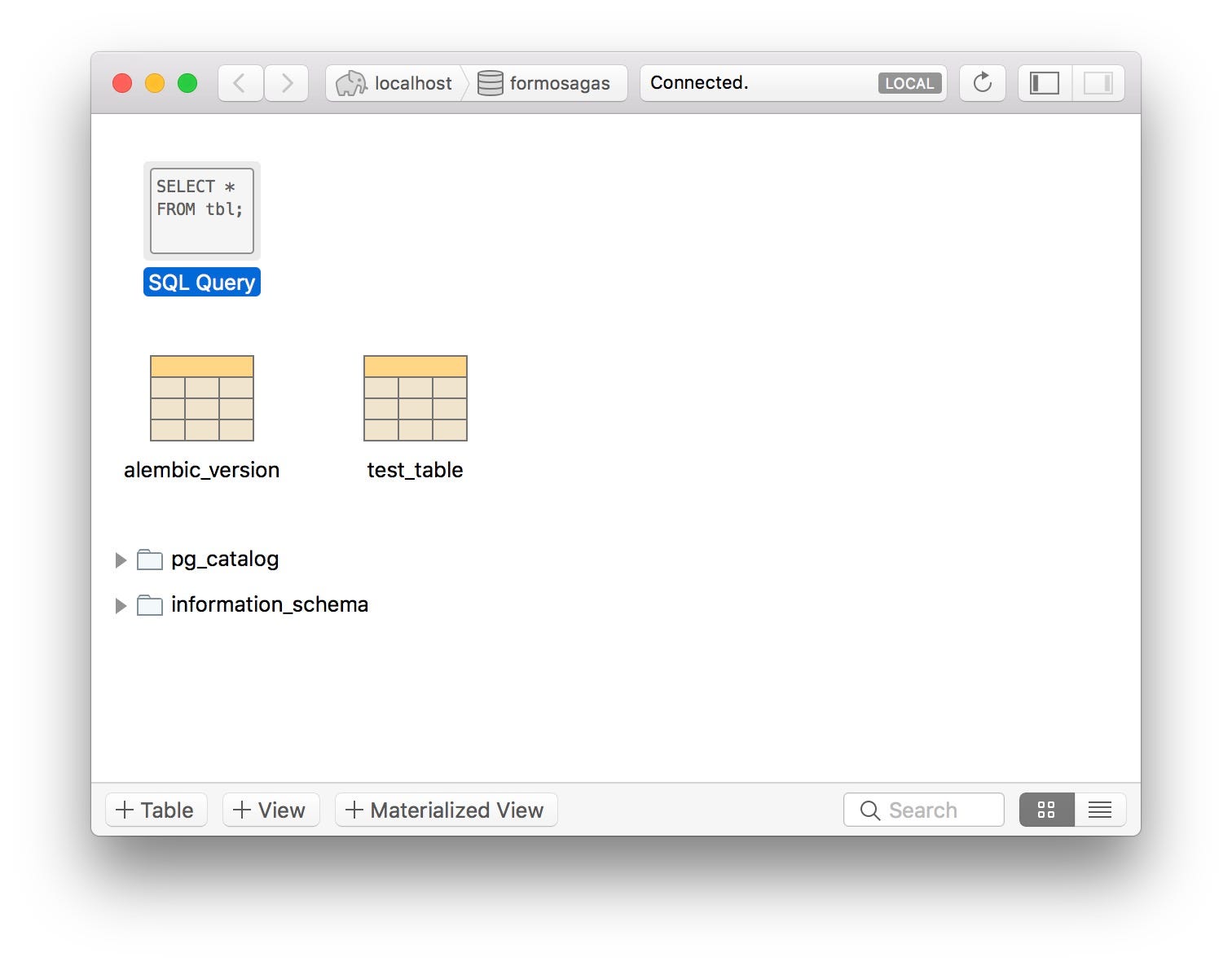

Windows installers

Interactive installer by EDB

Download the installer certified by EDB for all supported PostgreSQL versions. This installer includes the PostgreSQL server; pgAdmin, a graphical tool for managing and developing your databases; and StackBuilder, a package manager that can be used to download and install additional PostgreSQL tools and drivers.

There are two ways to connect to a local PostgreSQL server:

- Using a TCP connection (localhost, 127.0.0.1, ::1)

- Using a Unix socket connection (/tmp/.s.PGSQL)

Postico always uses TCP connections. Postico can't use socket connections because sandboxed apps are not allowed to access Unix sockets outside their sandbox.

Tools that help with designing a schema by creating entity-relationship diagrams and similar. Most are GUI tools.

The list also includes tools to help with 'visualization' or 'documentation' of existing databases.

For tools to 'run SQL and see its output' see PostgreSQL Clients.

- 1 Windows

- 1.1 Proprietary

- 2 Cross-Platform

- 2.1 Open Source (Free)

- 2.2 Proprietary

- 3 Commandline Tools

- 4 Unknown

Windows

Proprietary

pgModeler

Windows / Linux / macOS

This is open source (GPLv3) and can be freely built yourself if you have Qt available, but the downloadable binaries appear to be time-limited demos that can be unlocked via PayPal.

Aqua Data Studio

The Aqua Data Studio Entity Relationship Modeler (ER Modeler) helps you design complex database models for all major RDBMS vendors and versions. Use the Forward Engineer feature to model entities and convert them into SQL Scripts, or Reverse Engineer existing databases to visualize a database model.

DeZign

An intuitive database design and modeling tool for developers and DBAs that can help you model, create and maintain databases. The software uses entity relationship diagrams (ERDs, data models) to graphically design databases and automatically generates the most popular SQL and desktop databases.

ERBuilder Data Modeler

ERBuilder Data Modeler is a GUI data modeling tool that allows developers to visualize, design, and model databases by using entity relationship diagrams and automatically generates the most popular SQL databases. Generate and share the data model documentation with your team. Optimize your data model by using advanced features such as test data generation, schema compare, and schema synchronization.

PostgreSQL Maestro

Toad Data Modeler

Toad Data Modeler enables you to rapidly deploy accurate changes to data structures across more than 20 different platforms. It allows you to construct logical and physical data models, compare and synchronize models, quickly generate complex SQL/DDL, create and modify scripts, as well as reverse and forward engineer both databases and data warehouse systems.

May have free versions? Website is a bit of a wreck.

EMS SQL Manager

Cross-Platform

Open Source (Free)

Kexi

A visual database application creator, cf. Access or FileMaker. Windows/Linux.

Open System Architect

Windows / macOS / Linux / Solaris

OSA currently supports data modelling (physical and logical) with UML in the works.

SQL Power*Architect

Java

The SQL Power Architect data modeling tool was created by data warehouse designers and has many unique features geared specifically for the data warehouse architect. It allows users to reverse-engineer existing databases, perform data profiling on source databases, and auto-generate ETL metadata.

Valentina Studio

Windows / macOS / Linux

Free version supports reverse-engineering an existing schema, but only the proprietary version supports forward-engineering.

Open ModelSphere

Java

Open ModelSphere is a powerful data, process and UML modeling tool - supporting user interfaces in English and French.

Umbrello

Windows / macOS / Linux

Umbrello UML Modeller is a Unified Modelling Language (UML) diagram program based on KDE Technology.

UML allows you to create diagrams of software and other systems in a standard format to document or design the structure of your programs.

ERDesignerNG

Java, GPL

Proprietary

DbSchema

Windows / macOS / Linux / Java

Features interactive diagrams, relational data browse, schema compare and synchronization, query builder, query editor, HTML5 documentation, random data generator, forms and reports.

DbVisualizer

Windows / macOS / Linux / Java

A client that does a lot of things other than schema design. It has a free version that provides many of the features, but apparently not design and DDL export. DbVisualizer is a feature-rich, intuitive multi-database tool for developers, database administrators, and increasingly for advanced analysts, providing a single powerful interface across a wide variety of operating systems. With its easy-to-use and clean interface, DbVisualizer has proven to be one of the most cost-effective database tools available, not to mention that it runs on all major operating systems and supports all major RDBMSs. Users only need to learn and master one application. DbVisualizer integrates transparently with the operating system being used.

DbWrench

Windows / macOS / Linux / Java

Diagramming / Forward & Reverse Engineering

Moon Modeler

Windows / macOS / Linux

Features:

- Database modeling

- Visualization of nested structures and JSON

- SQL script generation

- Reverse engineering

- Support for PostgreSQL specifics, modeling of composite types, hierarchical JSON structures etc.

- GraphQL schema design

StarUML

Windows / macOS / Ubuntu

https://github.com/adrianandrei-ca/staruml-postgresql - extension to support PostgreSQL

(This is version 2. The much older, open source version 1 is available at http://staruml.sourceforge.net/v1/download.php)

Vertabelo

Vertabelo is an online database designer that runs in Chrome. It is free to use for smaller projects and has proprietary versions for larger database projects.

Features:

- Intuitive HTML5 web interface (Chrome)

- OS independent

- Sharing DB model with team members

- Support for PostgreSQL, MySQL, Oracle, MS SQL Server, DB2, SQLite, HSQLDB,

- Model versioning

- Dynamic/Visual search

- Live model validation

- Reverse engineering

Abris Platform

Web Application for Linux/Windows, requires Apache+PHP or Docker

Abris Platform is an application development platform for creating Web-based front-ends for PostgreSQL databases. It can be used to quickly create applications with convenient forms via declarative SQL descriptions.

It allows you to create, alter and drop tables, views, foreign keys and triggers.

Navicat

Windows, macOS, iOS

A general purpose client with good modeling features.

Commandline Tools

Tools that take a description of a database schema in one format and convert it to SQL, and sometimes vice-versa.

SQLFairy

Perl. Manipulates structured data definitions (mostly database schemas) in interesting ways, such as converting among different dialects of CREATE syntax (e.g., MySQL-to-Oracle), visualizations of schemas (pseudo-ER diagrams: GraphViz or GD), automatic code generation (using Class::DBI), converting non-RDBMS files to SQL schemas (xSV text files, Excel spreadsheets), serializing parsed schemas (via Storable, YAML and XML), creating documentation (HTML and POD), and more.

schemalint

A tool to verify the database schema against the 'Don't Do This' recommendations.

Unknown

Autodoc

perl, open source

This is a utility which runs through the PostgreSQL system tables and returns HTML, DOT, and several styles of XML which describe the database.

As a result, documentation about a project can be generated quickly and be automatically updatable, yet have a quite professional look if you do some DSSSL/CSS work.

Schema Spy

LGPLv3 Java-based console tool to auto-generate documentation in HTML format for an existing database. It uses viz.js or, optionally, Graphviz to render ERD diagrams. It can render markdown for database object comments. It also allows some basic preprocessing, defined in an XML configuration file, such as implied relationships, column suppression and foreign/remote tables.

DB Doc

Windows/Linux(Wine)

DB Doc helps you document your database structure and objects. Documents can be generated as PDF reports, HTML pages, Microsoft Word (docx) file, or a single compiled HTML file. The layout is fully customizable, and you can quickly view inter-object dependencies using hyperlinks.

MicroOLAP Database Designer

Windows ODBC

Database Designer for PostgreSQL is an easy CASE tool with an intuitive graphical interface allowing you to build a clear and effective database structure visually, see the complete picture (diagram) representing all the tables, references between them, views, stored procedures and other objects. Then you can easily generate a physical database on a server, modify it according to any changes you made to the diagram using fast ALTER statements.

GenMyModel

GenMyModel is an online modeling tool supporting database modeling. It is free to use for smaller projects and has a proprietary version for larger database projects.

Features:

- Intuitive HTML5 web interface (Chrome, Firefox, Safari, Internet Explorer)

- OS independent

- Instant sharing and collaboration

- Customizable SQL generators

- Model versioning

- Live model validation

dbForge Studio for PostgreSQL

ModelRight

WaveMaker

??? Doesn't seem to mention Postgres.

Other Resources

- Community Guide to PostgreSQL GUI Tools miscellaneous utilities

- PostgreSQL Clients GUI SQL clients

- Old possibly abandoned projects, see Community_Guide_to_PostgreSQL_Tools_Abandoned

Retrieved from 'https://wiki.postgresql.org/index.php?title=Design_Tools&oldid=35391'

Originally Posted On: 16 May 2016 | Last Updated: 03 Aug 2016

Caching

Caching is an important aspect of tuning database performance.

While this post is mainly focused on postgres, the ideas carry over easily to other database systems.

What is a cache and why do we need one

Different computer components operate at very different speeds, and we humans are extremely poor at understanding numbers at the scales computers work on.

The table below (taken from here) gives an idea. In the last column, every latency is rescaled to a human time scale.

| Access type | Actual time | Approximate time (1 CPU cycle scaled to 1 s) |
|---|---|---|
| 1 CPU cycle | 0.3 ns | 1 s |
| Level 1 cache access | 0.9 ns | 3 s |
| Level 2 cache access | 2.8 ns | 9 s |
| Level 3 cache access | 12.9 ns | 43 s |
| Main memory access | 120 ns | 6 min |
| Solid-state disk I/O | 50-150 μs | 2-6 days |
| Rotational disk I/O | 1-10 ms | 1-12 months |
| Internet: SF to NYC | 40 ms | 4 years |
| Internet: SF to UK | 81 ms | 8 years |
| Internet: SF to Australia | 183 ms | 19 years |
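The last column multiplies every latency by the same factor. That factor is chosen so that one 0.3 ns CPU cycle lasts one second, which is a factor of about 3.3 × 10^9. Below is a short Python sketch of that arithmetic. The helper `human_scale` is a hypothetical name used only for illustration.

```python
CPU_CYCLE_S = 0.3e-9  # one CPU cycle, first row of the table

MINUTE = 60
DAY = 24 * 60 * MINUTE
YEAR = 365 * DAY


def human_scale(seconds: float) -> float:
    """Rescale a latency so that one 0.3 ns CPU cycle lasts one second."""
    return seconds / CPU_CYCLE_S


print(f"L3 cache:     {human_scale(12.9e-9):.0f} s")
print(f"Main memory:  {human_scale(120e-9) / MINUTE:.1f} min")
print(f"SSD (150 us): {human_scale(150e-6) / DAY:.1f} days")
print(f"SF to NYC:    {human_scale(40e-3) / YEAR:.1f} years")
```

Running it shows that the table rounds loosely. Main memory works out to about 6.7 minutes rather than 6, and a 150 μs SSD I/O comes to just under 6 days.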

In a database system, we are mainly concerned with disk I/O.

Magnetic disks perform poorly for random I/O compared to their newer counterparts, SSDs.

Most OLTP workloads consist of random I/O, so fetching from disk can be very slow.

To overcome this, postgres caches data in RAM, which can greatly improve performance. Even compared with SSDs, RAM is much faster.

This general idea of a cache is common to almost all database systems.

Understanding terminologies

Before we move forward, it is necessary to understand certain terminologies.

I suggest starting with postgres physical storage.

Once you are done with that, inter db is another one which goes into a little more depth, in particular the section about heap tuples.

The official documentation for this is also available, but it is a little hard to get your head around.

Regardless of what it stores, postgres has a storage abstraction called a page (8 KB in size). The image below gives a rough idea.

This abstraction is what we will be dealing with in the rest of this post.

What is cached?

Postgres caches the following.

- Table data: the actual content of the tables.

- Indexes: indexes are also stored in 8 KB blocks, in the same place as table data (see Memory areas below).

- Query execution plans: when you look at a query execution plan, there is a stage called the planning stage, which selects the plan best suited for the query. Postgres can cache plans as well. This is done on a per-session basis, and once the session ends, the cached plan is thrown away. This can be tricky to optimize/analyze, but it is generally of less importance unless the query you are executing is really complex and/or there are a lot of repeated queries. The documentation explains this in detail pretty well. We can query pg_prepared_statements to see what is cached. Note that it is not available across sessions and is visible only to the current session.
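The per-session behaviour of cached plans can be illustrated with a toy Python model. All names here are hypothetical. Planning happens once at prepare time, and later executions reuse the stored plan. Another session cannot see that plan, just as pg_prepared_statements only lists the current session's statements, and closing the session discards it. PostgreSQL's real logic is more involved: it can switch between custom and generic plans, which is governed by plan_cache_mode.

```python
from typing import Dict, List


class ToySession:
    """One client session with its own private cache of prepared plans."""

    def __init__(self) -> None:
        self._plans: Dict[str, str] = {}  # statement name -> cached plan
        self.planner_calls = 0

    def _plan(self, sql: str) -> str:
        self.planner_calls += 1  # stand-in for the (expensive) planning stage
        return f"plan for: {sql}"

    def prepare(self, name: str, sql: str) -> None:
        self._plans[name] = self._plan(sql)

    def execute(self, name: str) -> str:
        return self._plans[name]  # reuse the cached plan, no replanning

    def prepared_statements(self) -> List[str]:
        """Like pg_prepared_statements: only this session's entries."""
        return sorted(self._plans)

    def close(self) -> None:
        self._plans.clear()  # cached plans are thrown away with the session


a, b = ToySession(), ToySession()
a.prepare("get_user", "SELECT * FROM users WHERE id = $1")
for _ in range(3):
    a.execute("get_user")
print(a.planner_calls, a.prepared_statements(), b.prepared_statements())
a.close()
```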

We will explore how table data and indexes are cached in detail further in this post.

Memory areas

Postgres has several configuration parameters and understanding what they mean is really important.

For caching, the most important setting is shared_buffers.

Internally, in the postgres source code, the number of these buffers is known as NBuffers, and this is where all of the shared data sits in memory.

shared_buffers is simply an array of 8 KB blocks. Each page carries metadata that identifies it, as mentioned above. Before postgres reads data from disk, it first looks up the page in shared_buffers. If there is a hit, it returns the data from there, avoiding a disk I/O.
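Since shared_buffers is carved into 8 KB slots, the number of buffers is just the configured size divided by 8 KB. The stock default of 128MB therefore gives 16,384 buffers. The lookup-before-disk behaviour can be sketched as a toy Python model. `ToyBufferPool` and its methods are hypothetical names. The real buffer manager uses a shared hash table from page identifiers to buffer slots, plus locking and eviction, and is far more involved.

```python
from typing import Callable, Dict, List, Optional, Tuple

BLCKSZ = 8 * 1024  # PostgreSQL's default page size: 8 KB

# Toy page identifier: (relation name, block number).
PageTag = Tuple[str, int]


def n_buffers(shared_buffers_bytes: int) -> int:
    """Number of 8 KB slots a given shared_buffers size provides."""
    return shared_buffers_bytes // BLCKSZ


class ToyBufferPool:
    """Fixed array of page slots plus a lookup table from page tag to slot."""

    def __init__(self, nbuffers: int, read_from_disk: Callable[[PageTag], bytes]):
        self.slots: List[Optional[bytes]] = [None] * nbuffers
        self.lookup: Dict[PageTag, int] = {}
        self.read_from_disk = read_from_disk
        self.hits = 0
        self.reads = 0

    def get_page(self, tag: PageTag) -> bytes:
        slot = self.lookup.get(tag)
        if slot is not None:
            self.hits += 1             # hit: served from memory, no disk I/O
            return self.slots[slot]
        self.reads += 1                # miss: fetch the page from "disk"
        data = self.read_from_disk(tag)
        free = self.slots.index(None)  # toy: raises ValueError when full (no eviction here)
        self.slots[free] = data
        self.lookup[tag] = free
        return data


pool = ToyBufferPool(n_buffers(128 * 1024 * 1024), lambda tag: bytes(BLCKSZ))
pool.get_page(("orders", 0))  # first access: miss
pool.get_page(("orders", 0))  # second access: hit
print(n_buffers(128 * 1024 * 1024), pool.hits, pool.reads)
```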

The LRU/Clock sweep cache algorithm

Putting data into the cache and evicting it is controlled by a clock sweep algorithm.

It is built for OLTP workloads, so that almost all of the traffic is served from memory.

Let’s talk about each action in detail.

Buffer allocation

Postgres is a process-based system, i.e. each connection has a native OS process of its own, spawned from the postgres root process (previously called postmaster).

When a process requests a page from the cache (this happens whenever that page is accessed via a typical SQL query), it requests a buffer allocation.

If the block is already in the cache, it gets pinned and then returned. Pinning marks the buffer as in use by that process so it cannot be evicted, and it also increases the buffer's usage count, discussed below. A page is said to be unpinned when no process holds a pin on it.

Only if there are no free buffers/slots for the page does it go for buffer eviction.

Buffer eviction

Deciding which pages should be evicted from memory and written to disk is a classic computer science problem.

A plain LRU (Least Recently Used) algorithm does not work well in practice, since it keeps no history of how often a page was used before.

Postgres keeps track of each page's usage count. An unpinned page whose usage count has dropped to zero can be evicted from memory. If that page is dirty (see below), it is written to disk before its buffer is reused.

Regardless of the nitty-gritty details, the cache algorithm by itself requires almost no tweaking and is much smarter than people usually think.
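Below is a simplified, single-threaded sketch of the clock sweep idea. Each buffer carries two counters:

- a pin count: how many backends are using it right now;
- a usage count: bumped on access, capped at 5 as in PostgreSQL, and decremented by the sweeping "clock hand".

A buffer is chosen for eviction only when it is unpinned and its usage count has reached zero. The class and method names are hypothetical. The real code (StrategyGetBuffer in src/backend/storage/buffer/freelist.c) also deals with concurrency and other details omitted here.

```python
from dataclasses import dataclass
from typing import List

MAX_USAGE_COUNT = 5  # PostgreSQL caps a buffer's usage count at 5


@dataclass
class ToyBuffer:
    page: str
    refcount: int = 0     # pins currently held by backends
    usage_count: int = 0  # bumped on access, decayed by the sweep


class ToyClockSweep:
    def __init__(self, buffers: List[ToyBuffer]):
        self.buffers = buffers
        self.hand = 0  # the "clock hand": next buffer to inspect

    def pin(self, i: int) -> None:
        """A backend starts using buffer i: pin it and bump its usage count."""
        buf = self.buffers[i]
        buf.refcount += 1
        buf.usage_count = min(buf.usage_count + 1, MAX_USAGE_COUNT)

    def unpin(self, i: int) -> None:
        """The backend is done with buffer i; the usage count is left as is."""
        self.buffers[i].refcount -= 1

    def find_victim(self) -> int:
        """Move the hand until an unpinned buffer with usage_count 0 turns up."""
        n = len(self.buffers)
        # Every full lap lowers each unpinned buffer's count by one, so
        # MAX_USAGE_COUNT + 1 laps suffice unless every buffer is pinned.
        for _ in range(n * (MAX_USAGE_COUNT + 1)):
            i = self.hand
            buf = self.buffers[i]
            self.hand = (self.hand + 1) % n
            if buf.refcount > 0:
                continue              # pinned buffers are never evicted
            if buf.usage_count == 0:
                return i              # victim found
            buf.usage_count -= 1      # spare it for now, one chance fewer
        raise RuntimeError("all buffers are pinned")


bufs = [ToyBuffer("a"), ToyBuffer("b"), ToyBuffer("c")]
clock = ToyClockSweep(bufs)
clock.pin(0)
clock.unpin(0)  # "a" was used once and released
clock.pin(1)    # "b" is still in use
victim = clock.find_victim()
print(bufs[victim].page)
```

In this run, "a" survives the first pass because it was used once, and its count drops to zero. "b" is skipped because it is still pinned, and "c" is picked for eviction.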

Dirty pages and cache invalidation

So far we have been talking about SELECT queries. What happens with DML queries?

Simple: they are written to the same pages. If a page is present in memory, the change is written there; otherwise the page is fetched from disk first and then modified.

This is where the notion of dirty pages comes in. A dirty page is one that has been modified in memory but not yet written to disk.

There is some more homework to be done before we proceed, in particular about WAL and checkpoints.

WAL is a redo log that keeps track of everything happening to the system by logging all changes separately to the WAL. The checkpointer is a process which periodically writes the so-called dirty pages to disk, mainly controlled by a time setting (checkpoint_timeout). It does so because, when the database crashes, it then only needs to replay the WAL written since the last checkpoint rather than everything from scratch.

This is the most common way dirty pages get written to disk. In a typical scenario, it is comparatively rare for a page to have to be written out at the moment the clock sweep evicts it.
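The interplay between dirty pages, WAL and checkpoints can be sketched as a toy Python model. All names are hypothetical. A write changes the cached page, marks it dirty and appends a redo record. A checkpoint writes every dirty page out, after which the pages are clean but still cached, and the older redo records are no longer needed for crash recovery. Real PostgreSQL also guarantees that the WAL describing a change reaches disk before the data page does, which this toy does not model.

```python
from typing import Dict, List, Set, Tuple


class ToyDirtyPages:
    """Cached pages, a dirty set, a redo log and a simulated disk."""

    def __init__(self) -> None:
        self.cache: Dict[str, str] = {}       # page -> contents held in memory
        self.dirty: Set[str] = set()          # pages changed since last written
        self.disk: Dict[str, str] = {}        # what has been written to disk
        self.wal: List[Tuple[str, str]] = []  # redo records since last checkpoint

    def write(self, page: str, contents: str) -> None:
        """A DML change: modify the cached page and record it in the WAL."""
        self.cache[page] = contents
        self.dirty.add(page)                  # memory and disk now differ
        self.wal.append((page, contents))

    def checkpoint(self) -> int:
        """Write out every dirty page; the pages stay cached, now clean."""
        for page in sorted(self.dirty):
            self.disk[page] = self.cache[page]
        flushed = len(self.dirty)
        self.dirty.clear()
        self.wal.clear()  # toy: crash recovery would now start from here
        return flushed


db = ToyDirtyPages()
db.write("p1", "x")
db.write("p2", "y")
db.write("p1", "z")  # p1 changed twice, but it is still one dirty page
print(len(db.wal))
flushed = db.checkpoint()
print(flushed, db.disk, sorted(db.cache))
```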

Understanding caches from explain analyze

EXPLAIN is a wonderful way to understand what is happening under the hood. It can even tell how many data blocks came from disk and how many came from shared_buffers, i.e. memory.

The query plan below gives an example.

"shared read" means the block was not in shared_buffers and had to be read in from outside it (from disk, or possibly from the OS cache). If the query is run again, and the cache configuration is right (discussed below), it will show up as "shared hit".

This is a very convenient way to learn how much is cached from a query's perspective, rather than fiddling with the internals of the OS/Postgres.
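These counters appear on the "Buffers:" lines that EXPLAIN (ANALYZE, BUFFERS) prints in text format. As a sketch, a small Python helper can pull out the shared hit and read counts and compute a hit ratio for a plan node. The helper is hypothetical and not part of any PostgreSQL tooling, and the sample lines are made up for illustration.

```python
import re
from typing import Tuple

# The "shared" part of a text-format EXPLAIN (ANALYZE, BUFFERS) line, e.g.
# "Buffers: shared hit=3 read=5"; any counter may be missing from the line.
SHARED_RE = re.compile(r"shared((?: (?:hit|read|dirtied|written)=\d+)+)")


def shared_hits_reads(line: str) -> Tuple[int, int]:
    """Return (shared hit, shared read) block counts from one plan line."""
    m = SHARED_RE.search(line)
    if m is None:
        return 0, 0
    counters = {k: int(v) for k, v in re.findall(r"(\w+)=(\d+)", m.group(1))}
    return counters.get("hit", 0), counters.get("read", 0)


def hit_ratio(line: str) -> float:
    """Fraction of shared blocks that were found in shared_buffers."""
    hits, reads = shared_hits_reads(line)
    total = hits + reads
    return hits / total if total else 0.0


first_run = "Buffers: shared read=48"
second_run = "Buffers: shared hit=48"
print(hit_ratio(first_run), hit_ratio(second_run))
```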

The case for sequential scans

A sequential scan, i.e. when there is no usable index and postgres has to fetch all the data of a table, is a problem area for a cache like this.

Since a single seq scan over a large table (in practice, one bigger than a quarter of shared_buffers) could wipe everything else out of the cache, it is handled differently.

Instead of the normal LRU/clock sweep algorithm, it uses a small ring of buffers totalling 256 KB. The plan below shows how it is handled.

Executing the above query again:

We can see that exactly 32 blocks have moved into memory, i.e. 32 × 8 KB = 256 KB. This is explained in src/backend/storage/buffer/README.
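The arithmetic and the ring behaviour can be sketched in Python. 256 KB divided by 8 KB pages gives 32 slots. The scan keeps reusing those slots instead of claiming fresh buffers, so after scanning a large table only the last 32 blocks remain. `ToyScanRing` is a hypothetical toy, not PostgreSQL's actual buffer access strategy code.

```python
from typing import List, Optional, Set

PAGE_KB = 8
RING_KB = 256
RING_SLOTS = RING_KB // PAGE_KB  # 256 KB / 8 KB = 32 buffers


class ToyScanRing:
    """A large scan cycles through a few private slots, not all of shared_buffers."""

    def __init__(self, nslots: int = RING_SLOTS) -> None:
        self.slots: List[Optional[int]] = [None] * nslots
        self.next = 0

    def load(self, block: int) -> None:
        # Reuse the oldest slot in the ring rather than claiming a new buffer.
        self.slots[self.next] = block
        self.next = (self.next + 1) % len(self.slots)

    def cached_blocks(self) -> Set[int]:
        return {b for b in self.slots if b is not None}


ring = ToyScanRing()
for block in range(10_000):  # scan a 10,000-block (about 78 MB) table
    ring.load(block)
print(RING_SLOTS, min(ring.cached_blocks()), max(ring.cached_blocks()))
```

However large the table, the scan only ever occupies 32 buffers. This matches the 32 blocks mentioned above.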

Memory flow and OS caching

Postgres, as a cross-platform database, relies heavily on the operating system for its caching.

shared_buffers actually duplicates what the OS does. A typical picture of how data flows through postgres is given below.

This is confusing at first, since caching is managed by both the OS and postgres, but there are reasons for this.

Talking about the operating system cache requires a post of its own, but there are many resources on the net which can be leveraged.

Keep in mind that the OS caches data for the same reason we saw above in "What is a cache and why do we need one".

We can classify I/O into two types: reads and writes. Put even more simply, data flows from disk to memory for reads and from memory to disk for writes.

Reads

For reads, as the flow diagram above shows, data flows from disk to the OS cache and then to shared_buffers. We have already discussed how pages stay in shared_buffers until they are evicted.

Sometimes both the OS cache and shared_buffers can hold the same pages. This may waste some space, but remember that the OS cache uses a simple LRU rather than a database-optimized clock sweep. Once reads for a page are hitting shared_buffers, they never reach the OS cache, so any duplicate copy there ages out and gets evicted easily.

In practice, not many pages end up stacked in both memory areas.

This is one of the reasons why it is advised to size shared_buffers carefully. Following hard-and-fast rules, such as giving it the lion’s share of memory or giving it too little, is going to hurt performance.

We will discuss more on optimization below.

Writes

Writes flow from memory to disk. This is where the concept of dirty pages comes in.

Once a page is marked dirty, it is eventually flushed to the OS cache, which then writes it to disk. This is where the OS has more freedom to schedule I/O based on the incoming traffic.

If the OS cache is too small, it cannot reorder writes and optimize I/O. This is particularly important for a write-heavy workload, so the size of the OS cache matters as well.

Initial configuration

As with many database systems, there is no silver bullet configuration which will just work. PostgreSQL ships with a basic configuration tuned for wide compatibility rather than performance.

It is the responsibility of the database administrator/developer to tune the configuration according to the application/workload. However, the folks at postgres have good documentation on where to start.

Once the default/startup configuration is set, we can do load/performance testing to see how it holds up.

Keep in mind that the initial configuration is tuned for availability rather than performance, so it is better to always experiment and arrive at a configuration that is more suitable for the workload under consideration.

Optimize as you go

If you cannot measure something, you cannot optimize it

With postgres, there are two ways you can measure.

Operating system

While there is no general consensus on which platform postgres works best on, I am assuming that you are using something in the Linux family of operating systems. The idea is similar elsewhere, though.

To start with, there is a tool called iotop which measures disk I/O. Similar to top, it comes in handy when measuring disk I/O. Just run the command iotop to measure reads/writes. This can give useful insights into how postgres is behaving under load, i.e. how much is hitting the disk and how much is served from RAM, which can be worked out from the load being generated.

Directly from postgres

It is always better to monitor directly from postgres, rather than going through the OS route.

Typically we would do OS level monitoring if we believe that there is something wrong with postgres itself, but this is rarely the case.

With postgres, there are several tools at our disposal for measuring performance with respect to memory.

- SQL EXPLAIN is the default tool. It gives more information than any other database system, but it is a little hard to get your head around and needs practice to get used to. Don't miss its several useful flags, especially BUFFERS, which we saw previously. Check out the links below to understand EXPLAIN in depth.

- Query logs are another way to understand what is happening inside the system. Instead of logging everything, we can log only queries that exceed a certain duration, otherwise known as slow query logs, using the log_min_duration_statement parameter.

- auto_explain: another cool thing you can do is automatically log the execution plan along with the slow queries. Very useful for debugging without having to run EXPLAIN by hand.

- pg_stat_statements: the above methods are good, but lack a consolidated view. This module ships with postgres itself, but is disabled by default. We can enable it by adding it to shared_preload_libraries (which requires a restart) and then running CREATE EXTENSION pg_stat_statements.

Once this is enabled and a fair number of queries have run, we can fire a query such as the one below.

It gives a lot of detail on how much time queries took, including their averages.

The disadvantage of this approach is that it costs some performance, so it is not generally recommended on production systems.

- pg_buffercache and pgfincore: if you want to go a little deeper, there are two modules which can dig directly into shared_buffers and the OS cache itself. An important thing to note is that EXPLAIN (ANALYZE, BUFFERS) shows data from shared_buffers only, not from the OS cache.

- pg_buffercache: this helps us see the data in shared buffers in real time. It collects information from shared_buffers and exposes it in the pg_buffercache view. A sample query goes as below, which lists the top 100 tables along with the number of pages cached.

- pgfincore: this is an external module that gives information on how the OS caches the pages. It is pretty low level and also very powerful.

- pg_prewarm: this is a built-in module that can load data into shared_buffers, the OS cache, or both. If you think memory warm-up is the problem, it is pretty useful for debugging.
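The sample pg_buffercache query is essentially a count of buffers grouped by relation. As a sketch, here is the same aggregation in Python. It assumes the view's rows have already been mapped to relation names, with None standing in for unused buffers, and it uses made-up data.

```python
from collections import Counter
from typing import Iterable, List, Optional, Tuple

PAGE_KB = 8


def top_cached_relations(
    relnames: Iterable[Optional[str]], limit: int = 100
) -> List[Tuple[str, int]]:
    """Count cached buffers per relation, largest first (like GROUP BY ... LIMIT)."""
    counts = Counter(name for name in relnames if name is not None)
    return counts.most_common(limit)


# Made-up sample: one entry per shared buffer, None for an unused buffer.
rows = ["orders"] * 3 + ["users"] + [None, None] + ["orders_pkey"] * 2
for rel, buffers in top_cached_relations(rows, limit=2):
    print(rel, buffers, f"{buffers * PAGE_KB} KB")
```

Multiplying a count by 8 KB gives the amount of that relation currently held in shared_buffers.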

There are several more, but I have listed the most popular and easiest to use ones, both for understanding the postgres cache and in general. Armed with these tools, there are no more excuses for a slow database because of memory problems.

